eFabric™ is a "Unified ML Factory" for Edge AI. It is a low-code / no-code platform for building and training Artificial Intelligence models from raw data and deploying them directly onto Syntiant’s Neural Decision Processors (NDP) and Renesas RZ/V2L family chips.

Key Capabilities

eFabric consolidates the entire AI lifecycle, from dataset management to training and deployment, into a single seamless workflow, eliminating the need for external tools.

It bridges the gap between data science and embedded engineering, allowing developers to deploy hardware-ready models in minutes without complex firmware coding.

Creates models specifically tuned for "always-on" battery-powered devices (microwatt scale) rather than general cloud AI.

eFabric eliminates deployment surprises by ensuring that the components used during testing and validation, such as the AI model and edge hardware, are carried into the production environment.

A GUI-driven experience that allows software developers to deploy hardware-ready models without deep hardware knowledge.

Accelerate time-to-market. Single streamlined workflow from dataset import to silicon deployment. No need for complex toolchains and SDK coding.

Build models for power-efficient and memory-constrained chips as well as embedded Linux MPU chips.

Accelerate development by starting with an EVK-based PoC, then scale seamlessly to production with our production-ready SoM.

Fast-track your product launch by embedding our production-ready SoM to deliver autonomous edge intelligence.

A seamless journey from raw data to deployed silicon, consolidated into a single intuitive interface.

Import, organize, and label datasets, and manage them in a Project.

Configure pre-processing and feature extraction.

Choose an existing model architecture or design your own.

Live training metrics, logs, and validation.

Export optimized model & flash to chip.

Improve models using refined datasets and optimized parameters.

Track, analyze, and optimize model performance.
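As a rough illustration of what the pre-processing and feature-extraction step involves for audio data, the sketch below splits a signal into overlapping frames and computes one log-energy value per frame. It is a generic example, not eFabric's implementation: eFabric configures this step through its GUI, and the function names, frame length, and hop size here are hypothetical choices made for the illustration.

```python
import math

def frame_signal(samples, frame_len, hop):
    """Split a 1-D list of samples into overlapping frames (ragged tail is dropped)."""
    return [samples[i:i + frame_len]
            for i in range(0, len(samples) - frame_len + 1, hop)]

def log_energy(frame, eps=1e-10):
    """Natural log of the mean squared amplitude; eps guards against log(0)."""
    return math.log(sum(x * x for x in frame) / len(frame) + eps)

def extract_features(samples, frame_len=400, hop=160):
    """Hypothetical feature extractor: one log-energy value per frame."""
    return [log_energy(f) for f in frame_signal(samples, frame_len, hop)]

# Synthetic input: 0.1 s of a 1 kHz tone sampled at 16 kHz (assumed values).
sample_rate = 16000
tone = [math.sin(2 * math.pi * 1000 * n / sample_rate) for n in range(1600)]
features = extract_features(tone)
print(len(features), round(features[0], 3))
```

With a 16 kHz sample rate, the assumed defaults correspond to 25 ms frames with a 10 ms hop, a common framing choice for keyword-spotting and sound-classification front ends.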

Zero Friction Handover: From Cloud to Edge

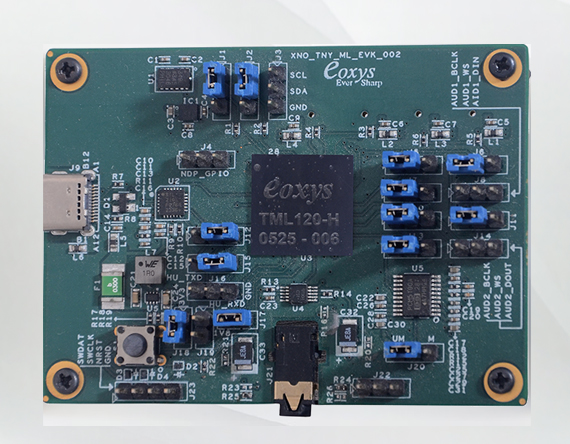

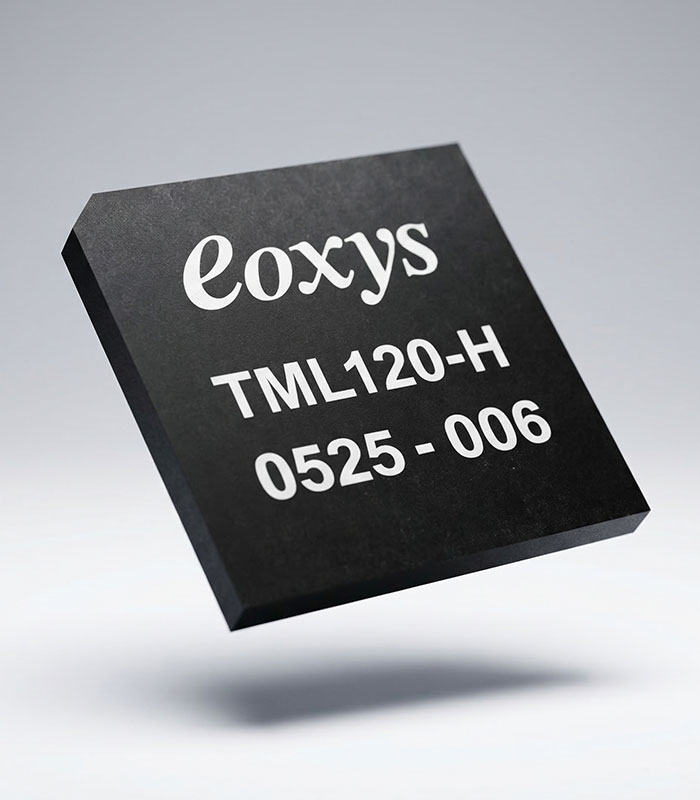

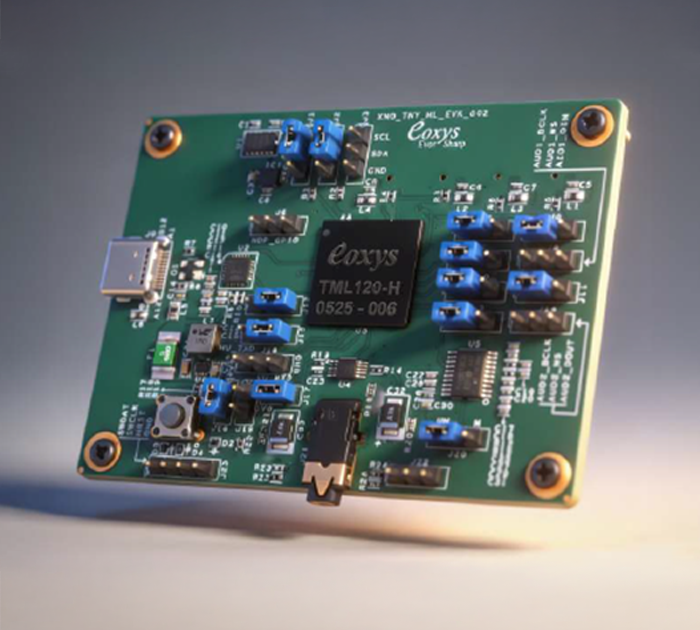

Featured Products: TML120 and R2L100 Evaluation Kits

TML120 is a solderable XENO+ Series Tiny ML Module designed for building audio- and sensor-based Edge AI IoT devices.

| Features | Description |
|---|---|
| MCU | ARM Cortex-M23 |
| Memory | 8MB NOR Flash |
| Audio-0 Interface | Audio port interface for external PDM mics |
| Audio-1 Interface | PDM/I2S/TDM for external audio input from mic/audio source |
| Audio-2 Interface | I2S/TDM for audio output to be sent to an audio processor |
| I2C Interface | I2C interface for connecting sensors for time-series data input |
| GPIO | 3x GPIOs from ML processor |
| Programming Interface | Serial UART-based programming interface with TML flashing tool |
| Host Interface | Serial UART-based interface to report ML classification events to host processor |
| Size | 15 x 15 mm |
| Operating Temp | -40 to 85 °C |
| Module Supply Voltage | 1.8V / 3.3V |

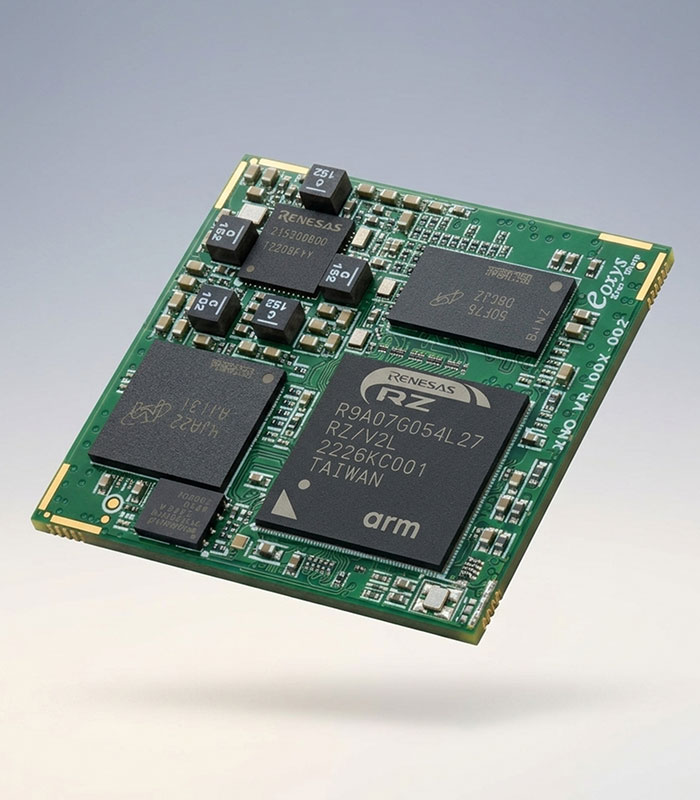

The XENO+ Vision ML (Machine Learning) SOM (System-on-Module) is a production-ready, solderable module that can serve as the core CPU module for building Linux-based video and image edge AI/ML devices.

| Features | Description |
|---|---|
| Main CPU | Dual ARM Cortex-A55 @ 1.2GHz |
| MCU | ARM Cortex-M33 @ 200MHz |
| RAM | 16-bit 2GB DDR4-1600 |
| Flash | 16GB eMMC Flash (Up to 32GB) |
| Serial Flash | 16MB Serial NOR Boot flash |
| ISP | Simple ISP |
| AI Accelerator | DRP-AI Accelerator |
| Video CODEC | H.264 Enc/Dec 2K/30fps |
| OS | Linux OS |
| Graphics Engine | ARM Mali-G31 3D GPU |
| Camera Interface | 1× MIPI CSI-2 (4 lanes) |
| Display Interface | 1× MIPI DSI (4 lanes) |
| Ethernet | 2× RGMII Gigabit Ethernet interface |
| SD Interface | 1× SD interface for WiFi module or SD Card |
| USB | 2× USB 2.0 for Camera or LTE module |
| Others | 4× I2C, 5× UART, 2× SPI, 8× ADC, 2× CAN-FD |

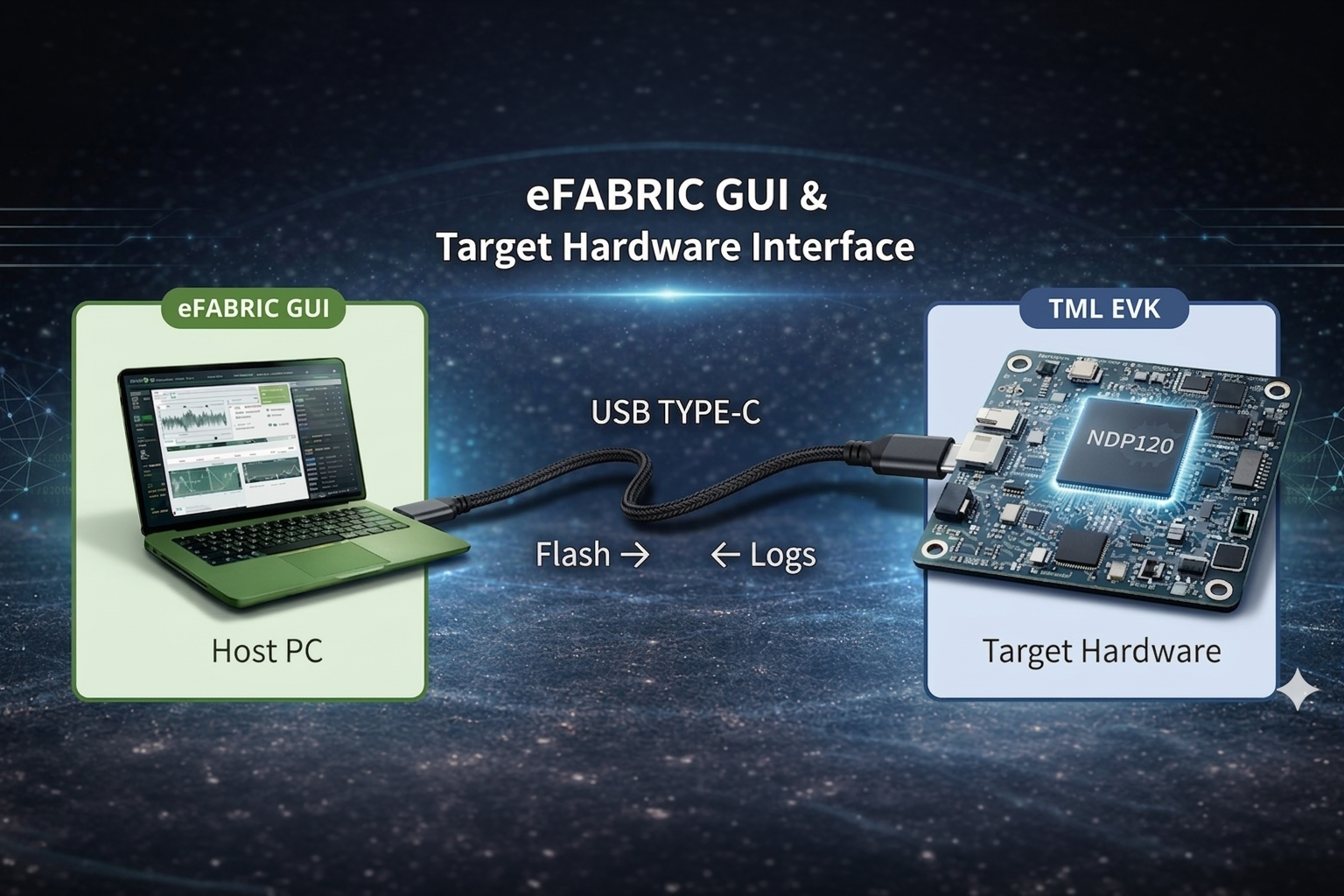

eFabric connects seamlessly to TML and R2L100 EVKs via a simple USB interface for instant model testing.

Flash the optimized model binary to the TML/R2L100 EVKs in seconds directly from the GUI.

Validate edge-AI performance on actual hardware with live confidence scores and logs.

Verify latency, power profiles, and accuracy in the real-world deployment environment.

Seamlessly transition validated models to high-volume manufacturing with optimized deployment toolsets.

Built for the Syntiant NDP and Renesas RZ/V Series edge AI Linux MPUs, for Audio/Vision/SLM.

Rapidly expanding to support the full spectrum of ultra-low-power chips and other hardware, coming soon.

Perfect For

eFabric excels in Audio Classification, enabling developers to build robust models for complex auditory environments.

Identify and classify diverse environmental sounds and events.

Detect specific trigger words or phrases with high accuracy and low latency.

Classify background noise and suppress it for clear audio.

Classify industrial machine sound patterns for operational health.

Enable new application domains by supporting the generation of sensor-based machine learning models, allowing eFabric to handle complex temporal data patterns and anomaly classification.

Accelerometer-based activity tracking and movement classification.

Precise hand-gesture recognition for touchless control interfaces.

Classify vibration patterns for anomaly detection in machinery.

RUL (Remaining Useful Life) & SOH (State of Health) prediction.

Perfect For

eFabric has evolved into a unified ML factory for all edge modalities. Next-wave enhancements enable advanced computer vision directly on edge chips.

Secure identity verification and access control on low-power devices.

Real-time occupancy tracking for smart buildings and retail.

Automated anomaly detection and vision-based threat monitoring.

One platform for Audio, Sensor, and Vision model generation.